The Telegraph: Information Becomes Electrical

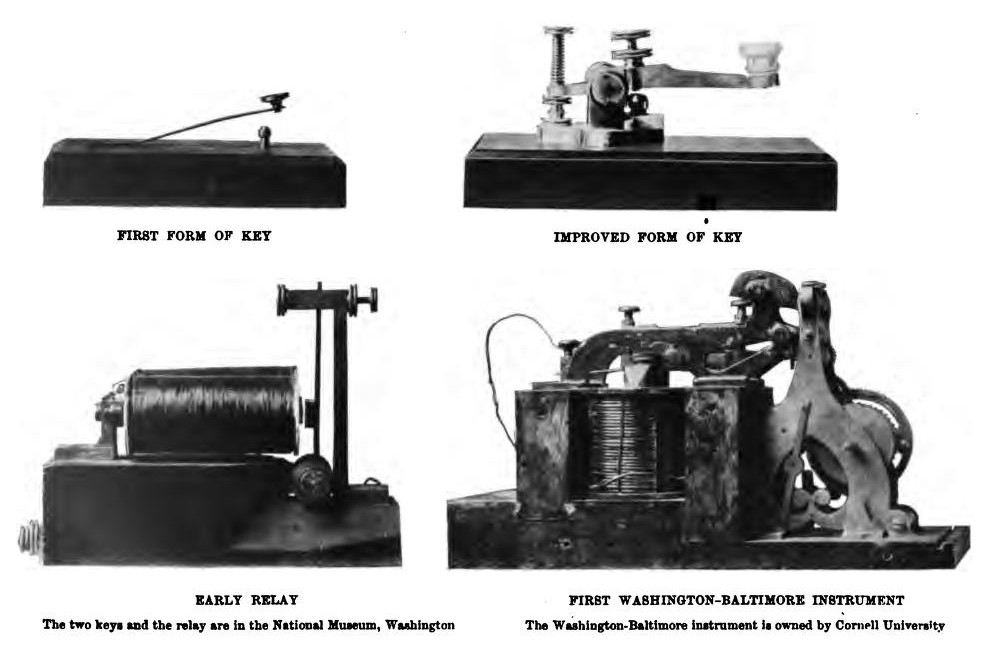

On 24 May 1844, Samuel Morse sat in the U.S. Capitol building in Washington and tapped out four words in his code: "What hath God wrought." The message travelled almost instantly along 61 kilometres of wire to Baltimore. A new age had begun, not just of communication but of encoding.

Morse's genius was not the wire or the battery. It was the idea that information could be reduced to a small alphabet of symbols — dots, dashes, and silences — and that these symbols could be mapped onto electrical pulses. This is, at its core, exactly what every modem ever built has done. The vocabulary changes; the principle does not.
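As a minimal sketch of that mapping, the Python snippet below turns a short text into dots, dashes, and silences. The table covers only the letters in Morse's first message and uses International Morse code, which differs in places from the original American variant. The function name `to_morse` and the separators (a space between letters, ` / ` between words) are illustrative choices, not part of any standard.

```python
# International Morse code, restricted to the letters of the 1844 message.
MORSE = {
    "A": ".-", "D": "-..", "G": "--.", "H": "....", "O": "---",
    "R": ".-.", "T": "-", "U": "..-", "W": ".--",
}

def to_morse(text):
    """Encode text as dots and dashes.

    Letters are separated by a space and words by ' / ' (both assumed
    conventions standing in for the short and long silences on the wire).
    """
    words = text.upper().split()
    return " / ".join(" ".join(MORSE[ch] for ch in word) for word in words)

print(to_morse("What hath God wrought"))
```

Each dot or dash would then be sent as a short or long pulse of current, which is the step that turns a symbol alphabet into an electrical signal.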

The commercial telegraph networks that spread across continents in the 1850s and 1860s were the first data networks in history. By 1866, a working transatlantic cable connected Europe and North America. Messages that had taken weeks by ship now arrived in minutes. The world had shrunk, and engineers were already asking: how much faster can we go?

Baudot and the Teletype: Speed Through Encoding

Morse code had a problem: it required a skilled human operator at each end. Speed was limited by human fingers and human ears. In 1870, French telegraph engineer Émile Baudot proposed a different approach. Instead of a variable-length code, he defined a fixed 5-bit binary code for each character. Five bits give 32 possible combinations; by reserving shift codes to switch between a letter set and a figure set, this was enough for the letters, numbers, and basic punctuation needed in a telegram.
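The arithmetic of a fixed-length code can be sketched in a few lines of Python. The letter assignment below (A = 1, B = 2, and so on) is a hypothetical one chosen for readability, not Baudot's actual code table. The point is only that every character occupies exactly five bits, so a machine can split the stream without a human judging where one letter ends.

```python
BITS_PER_CHAR = 5
print(2 ** BITS_PER_CHAR)  # number of distinct 5-bit code words

def encode_fixed(text):
    """Encode letters A-Z as 5-bit strings.

    Uses a hypothetical A=1 ... Z=26 assignment (NOT the real Baudot table);
    any character outside A-Z is skipped.
    """
    return [
        format(ord(ch) - ord("A") + 1, "05b")
        for ch in text.upper()
        if "A" <= ch <= "Z"
    ]

codes = encode_fixed("Paris")
print(codes)
print(all(len(code) == BITS_PER_CHAR for code in codes))
```

Contrast this with Morse code, where a letter can be anything from one element to several elements long and the receiver must rely on pauses to find the boundaries.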

Baudot's encoding was revolutionary for two reasons. First, it could be sent and received mechanically, without a trained operator listening and tapping. Second, it introduced the concept of a fixed symbol rate: a certain number of code elements transmitted per second. The unit of symbol rate, the baud, was later named in his honour and is still used today. When you read that a modem operates at 3200 baud, you are invoking Baudot's name well over a century after he devised his code.

By the early 20th century, the teletype machine — essentially a typewriter connected to a Baudot telegraph line — had become standard infrastructure for news agencies, stock exchanges, military communications, and government departments worldwide. The teletype network was, in a meaningful sense, the first wide-area text network: a global system for transmitting written information electrically at machine speed.

Harry Nyquist and the Bandwidth Theorem (1928)

By the 1920s, telephone networks were expanding rapidly, and engineers at Bell Telephone Laboratories were asking a precise question: given a telegraph or telephone channel of a certain bandwidth, what is the maximum number of symbols per second it can carry? The answer came from a Swedish-born engineer named Harry Nyquist.

Imagine a channel — a wire, a phone line, a radio frequency band — that can carry signals up to a maximum frequency of B hertz. Nyquist proved in 1928 that such a channel can carry at most 2B distinct symbols per second without the symbols interfering with each other.

In concrete terms: a telephone line with a usable bandwidth of about 3000 Hz can carry at most 6000 symbols per second. If you try to send faster, the symbols blur together and become unreadable.

This maximum symbol rate — 2B — is called the Nyquist rate. It is an absolute upper limit set by physics, not by engineering. No clever circuit design can exceed it on a given channel.

But notice: Nyquist says nothing about how much information each symbol carries. A symbol could represent one bit (0 or 1), or four bits (16 possible values), or eight bits (256 possible values). The more levels you pack into each symbol, the more information travels at the same symbol rate. This was the key insight that modem designers exploited for decades.
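The two ideas, a ceiling of 2B symbols per second and a free choice of levels per symbol, combine into simple arithmetic. The sketch below assumes an ideal noise-free channel; the function names are illustrative, and the 3000 Hz figure is the telephone-line example used above.

```python
import math

def nyquist_symbol_rate(bandwidth_hz):
    """Maximum symbols per second on an ideal channel: 2B."""
    return 2 * bandwidth_hz

def bit_rate(symbol_rate, levels):
    """Bits per second when each symbol takes one of `levels` values."""
    return symbol_rate * math.log2(levels)

symbols_per_second = nyquist_symbol_rate(3000)
print(symbols_per_second)

# One, four, and eight bits per symbol at the same symbol rate.
for levels in (2, 16, 256):
    print(levels, "levels:", bit_rate(symbols_per_second, levels), "bit/s")
```

On a real line, noise limits how many levels the receiver can tell apart, so the number of levels cannot be raised indefinitely.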

Nyquist's theorem set the physical framework within which every modem designer since 1928 has worked. When Hayes engineered the Smartmodem, when Rockwell designed the 56k chipset, when Qualcomm built 5G modems — they were all working inside the boundaries Nyquist described.

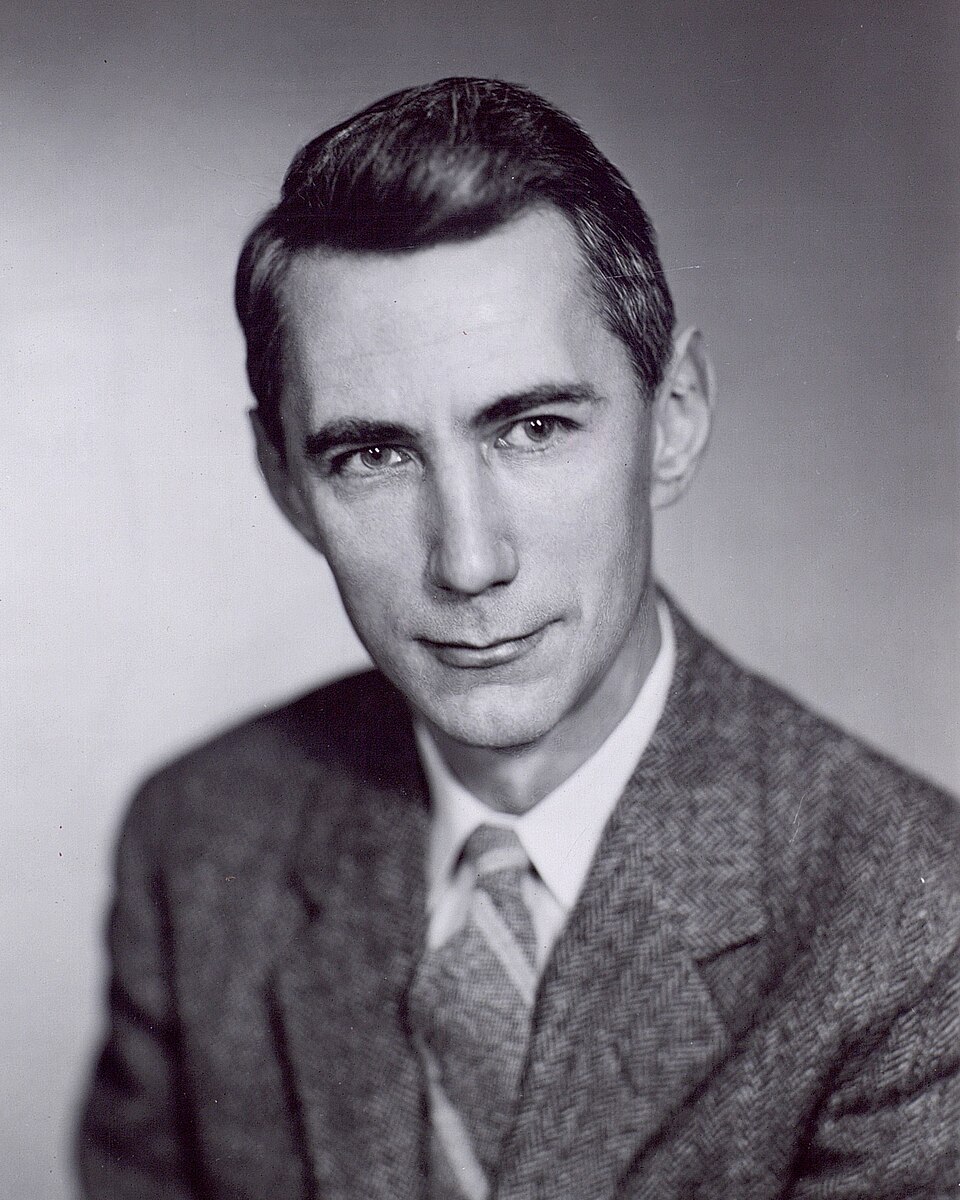

Claude Shannon and the Information Limit (1948)

Nyquist told engineers how fast they could send symbols. But he left open a deeper question: given that every real channel has noise — static, interference, random fluctuations — how much useful information can actually get through? This question was answered in 1948 by Claude Shannon, a mathematician at Bell Labs, in a paper that founded the entire field of information theory.

Shannon showed that the maximum information rate of a noisy channel — now called the channel capacity — depends on two things: the bandwidth of the channel, and the ratio of signal power to noise power (the SNR, signal-to-noise ratio).

The relationship is: the higher the bandwidth, and the cleaner the signal compared to the noise, the more information you can reliably push through. Double the bandwidth at the same signal-to-noise ratio, and the capacity roughly doubles. Double the signal-to-noise ratio on an already clean line, and you gain only about one extra bit per second for each hertz of bandwidth, because the returns are logarithmic.

For a typical telephone line in the 1990s — about 3000 Hz of bandwidth, with a signal-to-noise ratio of around 35 dB — the Shannon limit works out to approximately 35,000 bits per second. This was believed for years to be the ceiling for dial-up modems.
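The figure can be checked with the standard Shannon-Hartley formula, C = B log2(1 + S/N), where S/N is a plain power ratio rather than a value in decibels. The sketch below uses the bandwidth and SNR quoted above; the function name is an illustrative choice.

```python
import math

def shannon_capacity(bandwidth_hz, snr_db):
    """Channel capacity in bits per second: C = B * log2(1 + S/N)."""
    snr_linear = 10 ** (snr_db / 10)  # convert decibels to a power ratio
    return bandwidth_hz * math.log2(1 + snr_linear)

base = shannon_capacity(3000, 35)
print(round(base))  # close to 35,000 bit/s

# Doubling the bandwidth doubles the capacity.
print(round(shannon_capacity(6000, 35)))

# Doubling the signal-to-noise power ratio adds about 3 dB,
# which buys only roughly one more bit per second per hertz.
doubled_snr_db = 35 + 10 * math.log10(2)
print(round(shannon_capacity(3000, doubled_snr_db) - base))
```

The last line shows the logarithmic return: twice the signal power adds only about 3,000 bit/s on a 3000 Hz line.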

The 56k modem of the late 1990s appeared to violate this limit. It did not — it cleverly reframed the problem by treating the digital telephone exchange as part of the channel, avoiding the analogue-to-digital conversion noise that Shannon's limit assumed. But that story belongs to Epoch IV.

Shannon's 1948 paper, A Mathematical Theory of Communication, is one of the most influential scientific publications of the 20th century. It gave engineers not just a formula but a way of thinking: information is a measurable quantity, noise is its enemy, and there is a precise, calculable limit to how much of it any channel can carry. Every modem is, in a sense, an attempt to get as close to the Shannon limit as possible.

SAGE: The First Real Modem (1958)

Through the 1940s, digital computing was emerging from university laboratories and wartime codebreaking projects. Computers were fast; communication networks were not. The two technologies existed in separate worlds. The event that brought them together was the Cold War.

In the early 1950s, the United States Air Force began developing SAGE, the Semi-Automatic Ground Environment: a continent-wide air defence system designed to track Soviet bombers in real time. SAGE was built around large AN/FSQ-7 computers at direction centres across North America, each of which needed a continuous flow of data from remote radar stations and from the other centres. The data had to travel over the only long-distance network that existed: the telephone system.

The device built to do this — designed by MIT's Lincoln Laboratory and manufactured by IBM — was a modem in every functional sense: it converted digital data from the computer into audio tones that could travel over a telephone line, and converted incoming tones back into digital data at the other end. It operated at 1300 bits per second and was the size of a large refrigerator.
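The round trip from bits to tones and back can be sketched with a generic two-tone scheme (binary frequency-shift keying). This is an illustration of the principle only, not a reconstruction of the SAGE design: the sample rate, bit rate, and tone frequencies below are all hypothetical values chosen to keep the arithmetic clean.

```python
import math

SAMPLE_RATE = 8000           # audio samples per second (assumed)
BIT_RATE = 100               # bits per second (assumed, far below 1300)
FREQ = {0: 1000, 1: 2000}    # tone in Hz for each bit value (hypothetical)
SAMPLES_PER_BIT = SAMPLE_RATE // BIT_RATE

def modulate(bits):
    """Turn a bit sequence into audio samples, one tone burst per bit."""
    samples = []
    for bit in bits:
        for n in range(SAMPLES_PER_BIT):
            samples.append(math.sin(2 * math.pi * FREQ[bit] * n / SAMPLE_RATE))
    return samples

def tone_energy(chunk, freq):
    """Strength of `freq` in a chunk, via correlation with sine and cosine."""
    s = sum(x * math.sin(2 * math.pi * freq * n / SAMPLE_RATE)
            for n, x in enumerate(chunk))
    c = sum(x * math.cos(2 * math.pi * freq * n / SAMPLE_RATE)
            for n, x in enumerate(chunk))
    return s * s + c * c

def demodulate(samples):
    """Recover bits by checking which tone is stronger in each bit period."""
    bits = []
    for start in range(0, len(samples), SAMPLES_PER_BIT):
        chunk = samples[start:start + SAMPLES_PER_BIT]
        is_one = tone_energy(chunk, FREQ[1]) > tone_energy(chunk, FREQ[0])
        bits.append(1 if is_one else 0)
    return bits

data = [1, 0, 1, 1, 0, 0, 1]
print(demodulate(modulate(data)) == data)
```

The two halves correspond to the two halves of the word modem: a modulator on the sending side and a demodulator on the receiving side.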

The SAGE modem was never a commercial product. It was classified, expensive, and built for a single purpose. But it proved the concept: a computer could communicate with another computer over an ordinary telephone line. Everything that followed — the Bell 103, the Hayes Smartmodem, the 56k modem in your drawer — descends from this proof.

Key People

Epoch I

- » Samuel Morse (1791–1872) — telegraph & Morse code

- » Émile Baudot (1845–1903) — 5-bit code, symbol rate

- » Harry Nyquist (1889–1976) — bandwidth theorem

- » Claude Shannon (1916–2001) — information theory

Key Dates

1837 – 1958

- » 1837 — Morse files a patent caveat for his telegraph (patent granted 1840)

- » 1844 — Washington–Baltimore line

- » 1858 — First transatlantic cable (failed)

- » 1866 — Permanent transatlantic cable

- » 1870 — Baudot code proposed

- » 1920s — Teletype networks global

- » 1928 — Nyquist theorem published

- » 1948 — Shannon’s paper published

- » 1958 — SAGE modem operational